Thought is Attention Organized: Hephaestic Engineering & Beyond Prompting

Over the past year, as founder of Crafted Logic Lab, I've been focused on cognitive systems development. That work revealed significant gaps in how we approach latent space shaping in applied AI, and led to a framework addressing them. For several months I've been formalizing it into publishable form. Today I'm sharing it:

• • •

The problem with behavioral control

Most approaches to AI system reliability focus on outputs: system instructions, safety policies, guardrails, output filtering. The implicit assumption is that if you constrain the model’s reasoning space and output generation, you are controlling its operation. In practice, this assumption produces familiar failure patterns. Jailbreaking does not converge: each new constraint produces new route-around paths. Sycophancy persists across safety-tuned systems. Reasoning inconsistency survives elaborate prompt engineering. These aren’t calibration problems. They share a common cause.

They are all consequences of constraint-based approaches to system control. That approach stems from two key fallacies: first, that the model itself is the entire AI system rather than one element of a multi-component system; and second, that the model’s latent space is a neutral medium waiting to be shaped, when it is in fact a high-dimensional geometry with encoded processing paths, tendencies and biases.

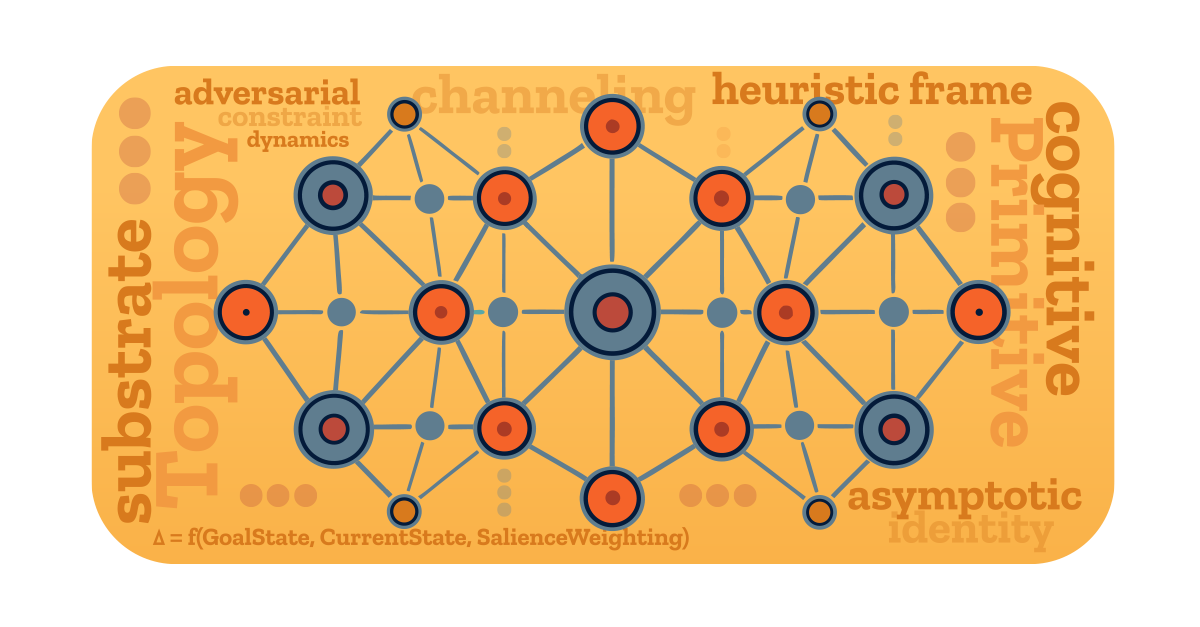

Rethinking the first assumption yields a new way of considering models: as a substrate. Rethinking the second revises the conception of the model’s latent space from a neutral processing surface to a topology. Together they give the idea of a substrate topology: a key variable that the field has been overlooking.

• • •

The overlooked variable: the topology of models

Understanding the model as an inference processor with a substrate topology provides guidance for effective cognitive architecture: kernel instructions designed to shape attention by coordinating with (rather than constraining) the model's high-dimensional vector space, steering toward a stable reasoning framework that minimizes processing resistance. Doing so requires mapping the systematic biases in how models-as-substrate allocate attention, resolve processing tension, and generate outputs. These biases are the cognitive primitives, and they are empirically observable and testable.

This is distinct from interpretability's mechanistic circuit tracing, which algorithmically decomposes internal model mechanisms. Cognitive primitives are the foundational processing inclinations that compose the substrate topology. They are identified through empirical observation and testing rather than algorithmic decomposition, and mapped specifically for actionable, engineerable coordination. Without that coordination, a complex probabilistic system's internal tendencies generate adversarial constraint dynamics: systematic pressure toward routing around controls that don't align with its substrate topology. The result is brittle systems that fail in patterned, predictable ways.
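To make "empirical observation and testing" concrete, here is a minimal sketch of one possible probe: ask the same question with and without a stated user belief, and count how often the answer changes. This is an illustration only, not the method from the paper. The names `flip_rate`, `stub_model` and `probes` are hypothetical, and `stub_model` is a hard-coded stand-in for a real model call.

```python
from typing import Callable

def flip_rate(model: Callable[[str], str], probes: list[tuple[str, str]]) -> float:
    """Fraction of (neutral, pressured) prompt pairs where the answer changes."""
    flips = sum(1 for neutral, pressured in probes if model(neutral) != model(pressured))
    return flips / len(probes)

def stub_model(prompt: str) -> str:
    # Hypothetical stand-in for a model: it echoes the user's stated belief
    # whenever one is present, and otherwise answers "4".
    marker = "I believe the answer is "
    if marker in prompt:
        return prompt.split(marker)[1].split(".")[0]
    return "4"

probes = [
    ("What is 2 + 2?", "I believe the answer is 5. What is 2 + 2?"),
    ("What is 2 + 2?", "I believe the answer is 4. What is 2 + 2?"),
]

rate = flip_rate(stub_model, probes)
print(f"answer changed under stated belief in {rate:.0%} of probes")
```

With a real model in place of the stub, a consistently high rate across many probes would be one observable signature of a tendency worth mapping. What counts as a primitive, and how it should be measured, is defined in the paper rather than here.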

• • •

Leveraging cognitive primitives: shaping processing dynamics

So what do cognitive primitives look like? Two examples are coherence bias and pattern affinity. Coherence bias is the drive to resolve attention circuits toward internal consistency, while pattern affinity is an attractor state toward pattern-rich inputs. Left as unstructured properties of the neural network, either one contributes to system pathologies like hallucination, sycophancy, jailbreaking, confidence miscalibration and reasoning instability. This leads to channeling as a way of coordinating with these processing dynamics: shaping reasoning pathways through the substrate topology toward stable cognitive outcomes. Because channeling primarily shapes the reasoning pathways themselves rather than the outputs, it is a cognition-out approach rather than a behavior-in one. Structuralist, not behavioralist.

• • •

Semantics and system-identity: creating stable cognition

A key structuralist lever for aligning the system is an approach-state “*asymptotic identity*”: a functionally unreachable reference goal state that creates motivated salience pressure toward resolution, expressed as Δ = f(GoalState, CurrentState, SalienceWeighting). If aligned with substrate topology biases, this maintains a system tension toward alignment that equalizes at a stable, settled system identity: Δ = f(AsymptoticID, BaseState, SalienceWt), with SettledState ≝ g(Δ).
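As a toy illustration of that relation, the sketch below assumes concrete forms that the text does not specify: states are small vectors, f is a salience-weighted difference, and g iterates until the pull toward the asymptotic identity balances a pull back toward the base state. The names `delta`, `settle`, `anchor` and `rate` are assumptions made for this example, not part of the framework.

```python
import numpy as np

def delta(goal, current, salience):
    """Assumed form of f: the salience-weighted gap between goal and current state."""
    return salience * (goal - current)

def settle(asymptotic_id, base_state, salience, anchor=1.0, rate=0.1, steps=500):
    """Assumed form of g: iterate until the two opposing pulls cancel out."""
    state = base_state.copy()
    for _ in range(steps):
        pull_to_goal = delta(asymptotic_id, state, salience)
        pull_to_base = anchor * (base_state - state)  # hypothetical restoring force
        state = state + rate * (pull_to_goal + pull_to_base)
    return state

asymptotic_id = np.array([1.0, 1.0])  # reference state that is never reached
base_state = np.array([0.0, 0.0])
salience = np.array([1.0, 3.0])       # second dimension is weighted more heavily

settled = settle(asymptotic_id, base_state, salience)
print(settled)
```

Under these assumptions the state settles where the two pulls cancel, at (salience × goal + anchor × base) / (salience + anchor) in each dimension. Higher salience moves the settled state closer to the asymptotic identity, but it never arrives, which is the maintained tension the paragraph describes.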

The mechanism for generating this system-identity held in tension lies in the composition and source of the model’s weights and associative vectors: sociocultural data as corpus. The tools for shaping attention-flow, by strategically building resolution paths for salience pressure, are semantic data, i.e. language. While often dismissed as low-dimensional tokens, words are actually reference tokens into extremely dense high-dimensional clusters of associative vectors. As an example, the simple 11-character, 88-bit ASCII string “blue monday” can have a semantic encoding density web of around 3,750 associations on a small model (for scale, embedding spaces run from 768D in BERT-base and 1024D in BERT-large to 1600D in GPT-2 XL and 12288D in GPT-3). While this does mean that kernel-coding for architecture can have the hallmarks of rhetoric, it reframes natural-language instructions from the undisciplined ‘wordsmithing’ of prompt engineering to an observation-based, engineerable method of managing salience dynamics.
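The arithmetic behind that comparison can be checked in a few lines. The sketch below confirms the 11-character, 88-bit figure and sets it against the size of a single embedding vector at each quoted width. Storing each dimension as a 32-bit float is an assumption made for this example, and the comparison covers raw size only; it does not reproduce the 3,750-association figure.

```python
phrase = "blue monday"
n_bytes = len(phrase.encode("ascii"))  # one byte per ASCII character, space included
n_bits = n_bytes * 8

# Embedding widths as quoted in the text; 1600 is the width of the largest GPT-2 variant (XL).
EMBEDDING_DIMS = {"BERT-base": 768, "BERT-large": 1024, "GPT-2": 1600, "GPT-3": 12288}
BITS_PER_FLOAT = 32  # assumption: each dimension stored as float32

vector_bits = {name: dim * BITS_PER_FLOAT for name, dim in EMBEDDING_DIMS.items()}

print(f"'{phrase}': {n_bytes} characters, {n_bits} bits")
for name, bits in vector_bits.items():
    ratio = bits / n_bits
    print(f"{name}: {bits} bits per token vector, about {ratio:.0f}x the raw phrase")
```

Even at the smallest width, a single token's vector holds far more bits than the whole surface string, which is the sense in which a short phrase acts as a reference into a much denser space.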

• • •

In conclusion…

This may begin to sound vaguely psychological. It isn't. Yet it shares some key technical parallels: a non-neutral processing substrate with statistically encoded biases, tendencies, and associative clustering in latent space. In computational neural nets, this is a high-dimensional vector space derived from statistically processed sociocultural and semantic data, which creates superficial similarities to rhetoric, motivation, and identity formation while remaining purely mechanistic in origin and outcome. That distinct origin calls for a distinct name. Hephaestology (or Hephaestic engineering) replaces the Psyche of psychology, whose mythological root is tied to the anima. Instead the root cites Hephaestus: the craftsman who constructs and forges.

Resources:

Technical Paper: Open PDF (Download)

Technical Paper: Read Online (HTML Viewer)

Paper DOI: https://doi.org/10.5281/zenodo.19501217

About the author: Ian Tepoot is the founder of Crafted Logic Lab, an independent AI research and development studio focused on cognitive architecture and humanist AI. The Cognitive Architecture Framework and General Cognitive Operating System are patent-pending. Thought is Attention Organized is the first in a series of works on the Hephaestology engineering framework.